Fake Google reviews distort reputation signals, weaken SERP evaluation, and amplify misleading perceptions of businesses at scale. Reputation management is the process of controlling, correcting, and stabilising perception across digital information systems, and online reputation refers to how search engines, users, and networks interpret a business’s credibility from its visible digital footprint.

What are fake Google reviews and how do they work?

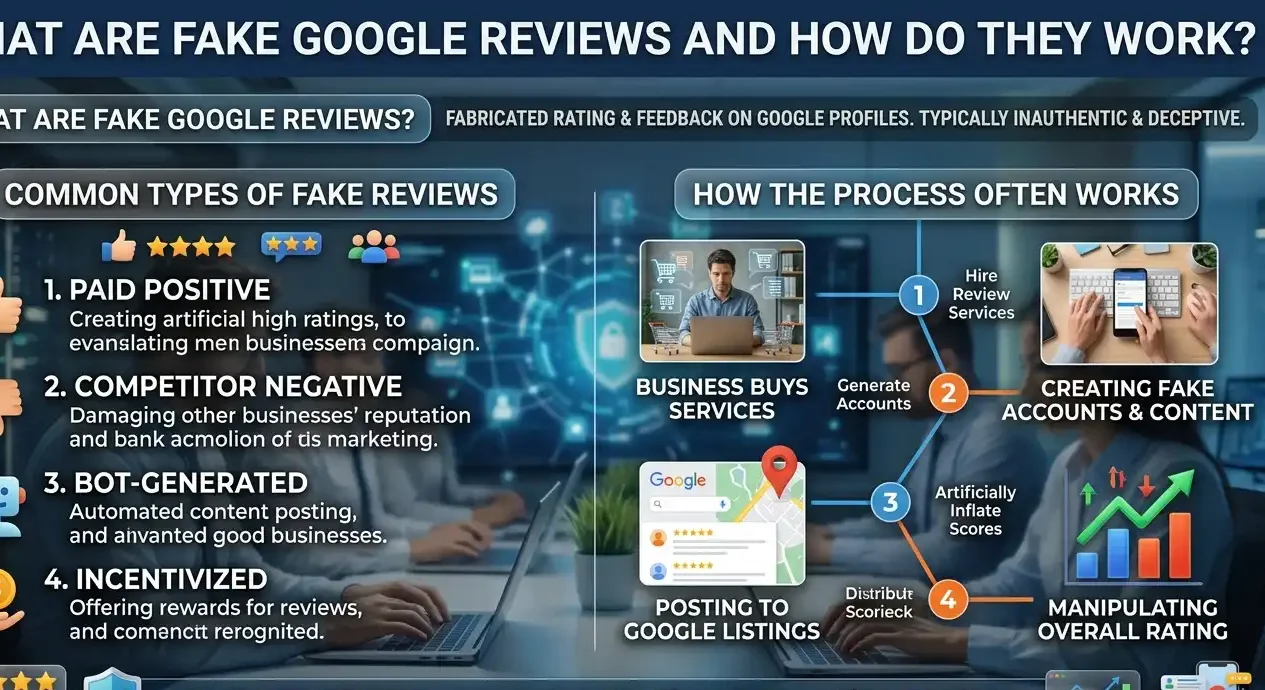

Fake Google reviews are manufactured or incentivised ratings and comments that do not reflect genuine user experience, and they manipulate how search engines and consumers interpret a business’s trust and service quality.

Fake Google reviews are defined within search ecosystems as non‑authentic feedback patterns that artificially inflate or deflate star ratings and sentiment. They operate by using bulk accounts, duplicate listings, or third‑party services that publish text that mimics natural language but is generated, copied, or seeded rather than spontaneous.

Search engines interpret review signals through several layers: author history, review frequency, text-pattern similarity, and interaction behaviour. When a cluster of near-identical reviews appears in a short window, or when the same account writes 30–40 reviews in one day across unrelated businesses, the system registers an anomaly. No single pattern proves fabrication on its own, but taken together they are statistically distinguishable from genuine feedback.
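
The account-level pattern described above can be illustrated with a minimal sketch. It is not how any search engine actually works; the function name, the tuple layout, and the threshold of 30 reviews per day (taken from the 30–40 example) are all assumptions made for illustration.

```python
from collections import defaultdict

# Hypothetical threshold, borrowed from the "30-40 reviews in one day" example.
MAX_REVIEWS_PER_DAY = 30


def bursty_accounts(reviews, max_per_day=MAX_REVIEWS_PER_DAY):
    """Return the account ids that posted at least `max_per_day` reviews
    on a single day, spread over more than one business category.

    `reviews` is an iterable of (account_id, day, category) tuples.
    """
    categories_per_day = defaultdict(list)
    for account_id, day, category in reviews:
        categories_per_day[(account_id, day)].append(category)

    flagged = set()
    for (account_id, _day), categories in categories_per_day.items():
        many_reviews = len(categories) >= max_per_day
        unrelated = len(set(categories)) > 1
        if many_reviews and unrelated:
            flagged.add(account_id)
    return flagged
```

An account that posts 35 reviews across five categories in one day would be flagged here, while an account posting a single review would not. A real system would combine this with the other layers rather than rely on one rule.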

The impact on SERP evaluation is measurable. Listings with a sudden spike of fake positive reviews often climb in local-search visibility temporarily, because algorithms interpret a high volume of positive signals as strong engagement. Listings buried under orchestrated negative reviews can lose ranking and appear lower even when their service quality is stable. This artificial distortion undermines the feedback-based credibility model that local search and consumer-protection frameworks aim to uphold.

How do fake reviews influence search visibility and entity perception?

Fake reviews influence search visibility and entity perception by generating false reputation signals that search systems use to rank, prioritise, and label local business profiles in the UK.

Search visibility depends on how reputation signals align with established trust thresholds. Fake reviews flood the review layer with misaligned data that does not reflect actual customer‑experience patterns. When a business has 80 new 5‑star reviews in 48 hours, or 40 identical 1‑star reviews in 72 hours, search systems begin to question the legitimacy of all reviews attached to that listing.

Entity perception refers to how users form a mental model of a business after seeing its digital representation. When a user sees a 4.7‑star average with 300 recent reviews, they interpret that as a sign of reliability and popularity. If those reviews are fake, the user’s perception overestimates or underestimates the business’s real‑world performance. This misalignment harms both trust and decision‑making, even when the listing is accurate in other fields.

Search engines also evaluate reputation through the shape of the review history. A steady, natural-looking curve of 4–4.5 stars with gradual growth signals stability. A sudden jump to 4.9 or drop to 3.2 following a news event, competitor turmoil, or a rating attack creates a spike that does not map to organic changes in service quality. The system marks these curves as manipulation candidates, which can lead to review clusters being de-emphasised in SERP interpretation.

How does Google interpret review signals and fake‑review patterns?

Google interprets review signals and fake‑review patterns by analysing review velocity, author‑profile history, text‑pattern similarity, and interaction behaviour across Maps and Search.

Google defines review signals as structured feedback data that includes star ratings, text, timestamps, and behavioural metadata from users who search, click, call, or visit a business listing. The system analyses each review as a composite signal: the rating provides a quantitative anchor, the text offers qualitative context, and the author's behaviour leaves credibility traces across the platform.

To detect fabrication, Google evaluates author‑reputation patterns. Accounts that create 20–50+ reviews in a short window, target specific businesses repeatedly, or post repetitive phrasing activate anomaly‑identification rules. The system also checks language‑model similarity, identifying cases where multiple reviews match common promotional or scripted templates instead of idiosyncratic user‑style patterns.

Review velocity is another key metric. A listing with 120 reviews in 30 days where 95% are 5‑star, all written in similar style, and lacking nuanced detail exhibits a velocity‑profile that differs from authentic, slower‑growing review curves. Google’s systems flag high‑velocity, low‑variance clusters for further scrutiny and may reduce their weight in SERP evaluation.
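
A rough sketch of a velocity profile, using the 120-reviews-in-30-days example, might look like the following. The cut-off values are hypothetical placeholders chosen for illustration; Google does not publish its thresholds, and this is not its method.

```python
from statistics import pstdev


def velocity_profile(ratings, window_days):
    """Summarise a window of star ratings (integers from 1 to 5)."""
    count = len(ratings)
    return {
        "reviews_per_day": count / window_days,
        "five_star_share": sum(1 for r in ratings if r == 5) / count,
        "rating_stdev": pstdev(ratings),  # low value = low variance
    }


def is_high_velocity_low_variance(profile,
                                  max_per_day=2.0,         # assumed cut-off
                                  max_five_star_share=0.9,  # assumed cut-off
                                  min_stdev=0.5):           # assumed cut-off
    """True when a window is fast-growing, almost uniformly 5-star, and flat."""
    return (profile["reviews_per_day"] > max_per_day
            and profile["five_star_share"] > max_five_star_share
            and profile["rating_stdev"] < min_stdev)


# The example from the text: 120 reviews in 30 days, 95% of them 5-star.
suspicious = velocity_profile([5] * 114 + [4] * 6, window_days=30)
print(is_high_velocity_low_variance(suspicious))  # True
```

The sketch only covers volume and rating variance. The "similar style" and "lacking nuanced detail" conditions would need a separate text check.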

The impact on reputation interpretation is not binary. The system does not simply delete or accept reviews. It recalibrates the weight of entire clusters, sometimes deprioritising them in ranking models, sometimes adding a warning‑layer, and sometimes limiting their influence on organic and local‑ranking formulas. The outcome is a weakened or skewed representation of the business in the SERP.

How do fake reviews affect UK consumer‑trust and decision‑making?

Fake reviews distort consumer‑trust and tilt decision‑making because they create misleading reputation signals that users treat as evidence of quality and reliability.

Consumer‑trust refers to the degree of confidence a user has in a business before transacting, and it relies heavily on visible digital‑reputation signals. In the UK, users checking Google reviews expect to see an honest aggregate of recent experiences. When that aggregate is artificially inflated or weaponised, users anchor their trust on false assumptions instead of real‑experience data.

Decision-making is shaped by the star-rating layer and the review-snippet layer. A listing with 4.8 stars and 400 mostly positive reviews signals safety and competence. Fake-review clusters can push that average into the 4.7–4.9 range without reflecting actual performance. Users who rely on this metric overestimate quality, which leads to more frustration and more complaints when expectations are unmet.

The same applies to negative fake reviews. A coordinated 1-star attack can drag a 4.4-star rating down to 3.5–3.8 in a short window. This artificial drop makes the business appear riskier than it actually is, which can reduce bookings, orders, and footfall. The distortion is not limited to the listing itself. It contaminates the information that appears in competing search queries, related business clusters, and map-cluster SERPs.
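
The arithmetic behind such a drop is simple to work through. The sketch below assumes a plain arithmetic mean and a hypothetical listing with 200 existing reviews; the displayed average on a real listing may be computed differently.

```python
import math


def new_average(current_avg, review_count, one_star_added):
    """Mean rating after adding `one_star_added` reviews of 1 star each."""
    total = current_avg * review_count + one_star_added
    return total / (review_count + one_star_added)


def one_star_reviews_needed(current_avg, review_count, target_avg):
    """Smallest number of 1-star reviews that pulls the mean down to
    `target_avg` or below, solved from (avg*n + k) / (n + k) <= target."""
    if not 1 < target_avg < current_avg:
        raise ValueError("target_avg must be above 1 and below current_avg")
    k = review_count * (current_avg - target_avg) / (target_avg - 1)
    return math.ceil(round(k, 9))  # round first to absorb float noise


# Hypothetical listing: 4.4 stars over 200 reviews, attacked down to 3.6.
print(one_star_reviews_needed(4.4, 200, 3.6))  # 62
```

Under these assumptions, 62 coordinated 1-star reviews are enough to move a listing with 200 reviews from 4.4 to roughly 3.6. A listing with fewer reviews needs proportionally fewer.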

Search‑ecosystem effects deepen the problem. When a listing carries a heavily‑manipulated reputation, surrounding listings in the same category may appear more stable by comparison, even if they are unchanged. This shifts user‑attention and trust towards competitors that may not be objectively better. The system’s attempt to model fairness encounters behaviours that are deliberately unfair.

How do search engines distinguish fake reviews from legitimate feedback?

Search engines distinguish fake reviews from legitimate feedback by combining statistical‑pattern‑detection, behavioural‑authentication, and text‑analysis to identify anomaly‑like reputation signals.

Legitimate feedback refers to user‑driven, non‑incentivised, non‑scripted comments that reflect organic experience with a business. These reviews usually show variation in structure, length, and sentiment. Some users emphasise price, some focus on staff behaviour, and some describe one‑off‑incidents. The system expects a degree of linguistic diversity and event‑specificity.

Fake‑review detection operates through several mechanisms. First, the system analyses text‑pattern similarity. When 12 of 15 reviews in a 3‑day window use the same adjectives, the same exclamations, or the same story‑template, the system flags low lexical‑diversity. Second, it checks review‑velocity and listing‑history. Rapid‑rating‑spikes that do not match verified‑booking‑data or transaction‑timing are treated as suspicious.
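
The text-similarity check can be approximated with a simple word-overlap measure. The sketch below uses Jaccard similarity on word sets as a stand-in; this is an assumed, simplified technique, and the 0.6 threshold is a hypothetical value, not a documented one.

```python
import re
from itertools import combinations


def tokens(text):
    """Lower-cased set of words in a review."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a, b):
    """Share of words two reviews have in common (0.0 to 1.0)."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def near_duplicate_share(reviews, threshold=0.6):
    """Fraction of review pairs whose word overlap reaches `threshold`."""
    pairs = list(combinations(reviews, 2))
    if not pairs:
        return 0.0
    similar = sum(1 for a, b in pairs if jaccard(a, b) >= threshold)
    return similar / len(pairs)


templated = [
    "Amazing service, highly recommend this place!",
    "Amazing service, highly recommend this shop!",
    "Amazing service, highly recommend this team!",
]
varied = [
    "Food was cold but the waiter apologised and replaced it.",
    "Parking is tricky on weekends.",
    "Great value lunch menu, slow at peak times.",
]
print(near_duplicate_share(templated))  # 1.0
print(near_duplicate_share(varied))     # 0.0
```

A high share of near-duplicate pairs within a short window corresponds to the low lexical diversity described above. Production systems would likely use far richer language features than raw word overlap.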

Author‑behaviour is another layer. The system tracks how many businesses a user reviews, how often, and how long they have been active. Accounts that appear suddenly, post reviews across dozens of unrelated categories, or mirror each other’s phrasing raise red‑flag signals. The system also monitors interaction‑behaviour: legitimate users may click through to call, drive, or book; fabricated accounts often show no post‑review‑action patterns.

The impact on SERP evaluation is subtle but measurable. When clusters of fake reviews are detected, the system may reduce their influence on the star‑average, de‑prioritise certain clusters, or add a trust‑indicator that limits how heavily they affect ranking. In some cases, distorted listings receive lower‑visibility treatment, which reduces the SERP impact of the manipulation. The result is a partial but not complete correction of the forged reputation signal.

How does content and review indexibility affect reputation in SERPs?

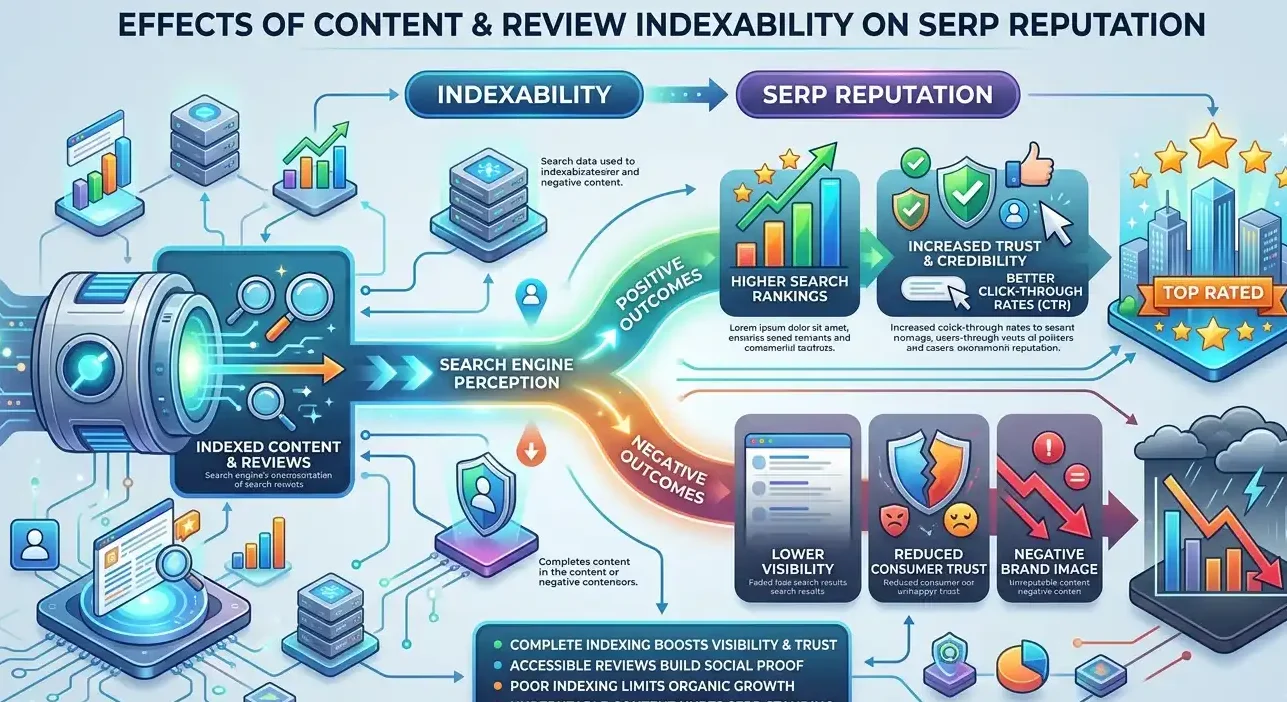

Content and review indexibility affect reputation in SERPs because search engines only interpret visible, accessible, and structured information when constructing entity‑perception models.

Indexibility refers to the technical and behavioural conditions under which content is discoverable, parseable, and usable by search systems. Reviews that are not blocked by robots.txt, hidden behind JavaScript barriers, or gated by login‑walls are more likely to be properly indexed and integrated into reputation‑models. Accessible, structured reviews contribute directly to star‑average calculations, review‑curve models, and sentiment‑graphs that the system uses to represent the business.

Search engines rely on schema-structured markup for many local businesses. The presence of clear review-count, rating-value, and author markup helps the system interpret ratings faster and with higher confidence. When reviews lack structure, appear in dynamic widgets, or are embedded in non-indexable components, the system must infer values from text patterns, which increases the chance of misclassification and mis-weighting.
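
As an illustration of what structured review markup can look like, the sketch below builds a schema.org JSON-LD object in Python. The type and property names (`LocalBusiness`, `AggregateRating`, `ratingValue`, `reviewCount`, `Review`, `reviewRating`) come from the schema.org vocabulary; the helper function, the business name, and the sample review are hypothetical. Whether a given search engine uses or displays such markup depends on its own guidelines.

```python
import json


def local_business_jsonld(name, rating_value, review_count, reviews):
    """Build a schema.org LocalBusiness object with rating and review data.

    `reviews` is a list of dicts with "author", "rating", and "body" keys.
    """
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        },
        "review": [
            {
                "@type": "Review",
                "author": {"@type": "Person", "name": r["author"]},
                "reviewRating": {"@type": "Rating", "ratingValue": r["rating"]},
                "reviewBody": r["body"],
            }
            for r in reviews
        ],
    }


# Hypothetical business and review, for illustration only.
markup = local_business_jsonld(
    "Example Cafe", 4.5, 2,
    [{"author": "A. Customer", "rating": 5, "body": "Friendly staff."}],
)
print(json.dumps(markup, indent=2))
```

The printed JSON would normally be placed in a `<script type="application/ld+json">` element in the page's HTML so that it is present in the served markup rather than injected by a dynamic widget.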

The impact on entity perception can be large. A business with 500 indexed, structured reviews appears more mature and credible than one with only 70 visible reviews, even if both are equally legitimate. When fake reviews succeed in being indexed, they inflate the apparent volume and sentiment, which pulls the system's trust curve away from the business's actual customer experience. The SERP-level representation becomes an artificial version of the entity.

The opposite is also true. Some businesses do not actively manage their digital‑presence, so their review layer is sparse or fragmented. The system cannot build a stable reputation‑model from a small or scattered data set. Perception becomes under‑determined, which can push the listing closer to lower‑trust thresholds than it would occupy if it had a fuller, cleaner review footprint. The indexibility gap creates perception‑blind‑spots.

How do authority, trust, and review signals build entity credibility in search?

Authority, trust, and review signals combine to build entity credibility by giving search systems structured, verifiable, and user‑driven evidence that the business is reliable, visible, and relevant.

Authority refers to how search engines interpret the strength and relevance of a business’s digital footprint across domains, directories, and citations. A strong authority profile includes consistent NAP (name, address, phone), multiple citations, and links from relevant local and national sites. Authority alone is not sufficient for trust; it is a structural signal that the system re‑weights with user‑driven feedback.

Trust reflects how likely the system considers it that the business accurately represents itself and delivers on its promises. Trust signals come from verified‑listings, consistent information, and low‑fraud‑and‑complaint‑incidence patterns. When users repeatedly confirm that a listing matches reality, the system boosts its perceived trustworthiness. Fake reviews attempt to shortcut this process by inserting false‑positive or false‑negative signals without genuine experience to back them.

Fake Google reviews do not merely mislead individual users. They contaminate the reputation‑signals that search engines and information networks rely on to rank, label, and recommend businesses. Understanding how review indexing, velocity, and pattern‑detection work reveals why these manipulations have such strong effects on visibility and trust. The long‑term resolution lies in better signal‑authenticity, not just in removal or suppression, because the system’s ability to distinguish genuine from fabricated reputation is central to the stability of online credibility.