Reputation management is the structured practice of controlling how entities appear and are evaluated within search‑driven information systems. Online reputation refers to how search visibility, review signals, and content ranking combine to form the public’s perceived credibility of a brand or person.

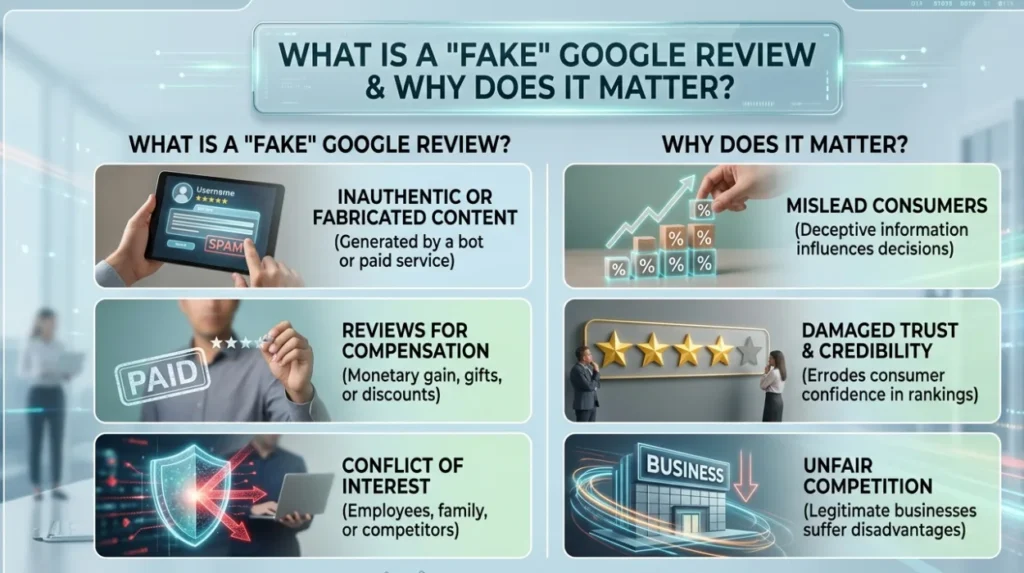

What is a “fake” Google review and why does it matter?

A fake Google review is a review that is inauthentic, fabricated, incentivised, or posted by someone without direct experience; it distorts reputation signals and skews SERP evaluation.

A fake review is defined by its lack of a genuine customer experience or by being placed to manipulate ratings. The mechanism of harm operates through review aggregation: Google aggregates star ratings and textual sentiment to produce a composite reputation signal visible in maps, knowledge panels, and organic listings.

The presence of inauthentic reviews changes the sentiment distribution and can reduce entity credibility in the eyes of users and algorithms. Search engines factor review volume, recency, and sentiment into local rankings and trust signals. A cluster of fake positive reviews can artificially inflate placement; a cluster of fake negative reviews can depress visibility and booking or purchase intent.
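To make the aggregation effect concrete, here is a minimal Python sketch. It assumes the displayed rating is a simple arithmetic mean, because Google does not publish its exact formula. The ratings are hypothetical, and `rating_summary` is an illustrative helper rather than a Google API. The sketch shows how a cluster of three fake one‑star reviews shifts both the average and the shape of the distribution.

```python
from collections import Counter


def rating_summary(ratings):
    """Return the mean star rating (2 dp) and how many reviews carry each star value.

    Assumes a simple arithmetic mean; Google's actual formula is not published.
    """
    counts = Counter(ratings)
    average = round(sum(ratings) / len(ratings), 2)
    distribution = {star: counts.get(star, 0) for star in range(1, 6)}
    return average, distribution


# Hypothetical ratings for illustration only.
genuine = [5, 4, 4, 3, 5, 2, 4]
fake_negative = [1, 1, 1]  # a cluster of fabricated one-star reviews

print(rating_summary(genuine))
print(rating_summary(genuine + fake_negative))
```

With these made-up figures, three fake reviews pull the average from about 3.86 down to 3.0 and create a one‑star spike that did not exist in the genuine distribution.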

What evidence does Google use to judge a review’s authenticity?

Google evaluates metadata, account signals, content patterns, and corroborating data to judge review authenticity; together, this evidence determines whether a review is eligible for removal during moderation.

Google assesses authenticity using technical signals (IP patterns, device fingerprinting, geolocation), account history (age, review frequency), content analysis (repetition, templated language), and corroborating proof (transactional records, booking confirmations). The mechanism combines automated detection and manual review. Automated systems flag suspicious clusters for escalation, and manual reviewers examine evidence such as screenshots, timestamps, and proof of purchase. For search visibility, removing inauthentic reviews reduces noise in review clusters and corrects the sentiment distribution used in SERP evaluation.
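The cluster flagging described above can be approximated with a simple heuristic. The sketch below is an illustration built on assumptions, not Google's detector. It treats accounts under 30 days old, or with only one review, as "new", and it flags three or more such reviews arriving within 24 hours. The `Review` fields, the thresholds, and the `flag_suspicious_bursts` function are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Review:
    review_id: str
    posted_at: datetime
    account_age_days: int      # hypothetical: age of the reviewer's account at posting time
    account_review_count: int  # hypothetical: total reviews the account has written


def flag_suspicious_bursts(reviews, window=timedelta(hours=24),
                           min_cluster=3, max_account_age_days=30):
    """Return IDs of new-account reviews that arrive in a tight time burst."""
    # Keep only reviews from young or single-review accounts, oldest first.
    candidates = sorted(
        (r for r in reviews
         if r.account_age_days < max_account_age_days or r.account_review_count <= 1),
        key=lambda r: r.posted_at,
    )
    flagged = set()
    start = 0
    for end in range(len(candidates)):
        # Slide the left edge forward until the span fits inside the window.
        while candidates[end].posted_at - candidates[start].posted_at > window:
            start += 1
        if end - start + 1 >= min_cluster:
            flagged.update(r.review_id for r in candidates[start:end + 1])
    return flagged


base = datetime(2024, 5, 1, 9, 0)
reviews = [
    Review("a", base, 5, 1),
    Review("b", base + timedelta(hours=2), 3, 1),
    Review("c", base + timedelta(hours=5), 10, 2),
    Review("d", base + timedelta(days=10), 900, 40),  # established account
    Review("e", base + timedelta(days=20), 2, 1),     # new account, but isolated
]
print(sorted(flag_suspicious_bursts(reviews)))
```

Reviews "a", "b" and "c" are flagged as a burst. The isolated new-account review "e" is not flagged, which mirrors the idea that clusters, rather than single data points, trigger escalation.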

How do you report a fake Google review? Step‑by‑step

Reporting a fake Google review is a structured submission process: identify the review, collect evidence, submit via Google’s reporting interface, and track the outcome.

Identify the target review on the Business Profile or Google Maps listing. Collect evidence that ties the review to inauthentic behaviour, for example: screenshots showing duplicate text across accounts, proof that no transaction took place (dates, invoices), internal booking records (order IDs, customer names), and communication logs if a dispute exists. Use Google's “Flag as inappropriate” option on the review to submit an initial report. For stronger cases, prepare a detailed escalation package for Google Support or the legal‑removal channel. Attach the evidence and a concise explanation of the breach (for example, “this review was paid for” or “this review was posted by a competitor via multiple accounts”). Google logs the report and uses it to triage the review into automated or manual moderation. The outcome varies: Google may remove the review, hide it pending investigation, or retain it if the evidence is insufficient. Track the effect on search visibility by measuring changes in placement frequency and star average after the action.
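A consistent structure for each report makes escalation and outcome tracking easier. The sketch below shows a hypothetical record format. The class names, fields, URL and status values are illustrative only and are not part of any Google tool. The record assembles a concise evidence summary and stores which of the three outcomes described above occurred.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class EvidenceItem:
    kind: str       # e.g. "screenshot", "invoice", "booking_record"
    reference: str  # file name or internal record ID
    note: str       # one line on what the item proves


@dataclass
class ReviewReport:
    review_url: str
    reviewer_name: str
    posted_on: date
    alleged_breach: str
    evidence: list[EvidenceItem] = field(default_factory=list)
    status: str = "flagged"

    def summary(self) -> str:
        """Concise, numbered evidence summary for an escalation request."""
        lines = [
            f"Review: {self.review_url}",
            f"Reviewer: {self.reviewer_name} (posted {self.posted_on.isoformat()})",
            f"Alleged breach: {self.alleged_breach}",
            "Evidence:",
        ]
        lines += [f"  {i}. [{e.kind}] {e.reference}: {e.note}"
                  for i, e in enumerate(self.evidence, start=1)]
        return "\n".join(lines)

    def record_outcome(self, outcome: str) -> None:
        """Store Google's decision using one of the three outcomes described above."""
        allowed = {"removed", "hidden_pending_investigation", "retained"}
        if outcome not in allowed:
            raise ValueError(f"unknown outcome: {outcome}")
        self.status = outcome


report = ReviewReport(
    review_url="https://example.com/review/123",  # placeholder, not a real review link
    reviewer_name="J. Smith",
    posted_on=date(2024, 5, 2),
    alleged_breach="No matching transaction; text duplicated on other listings",
)
report.evidence.append(EvidenceItem("booking_record", "bookings-2024-05.csv",
                                    "No booking under this name in May 2024"))
report.evidence.append(EvidenceItem("screenshot", "duplicate-text.png",
                                    "Identical text on two other business profiles"))
print(report.summary())
report.record_outcome("hidden_pending_investigation")
```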

Which types of evidence improve removal success?

Concrete, verifiable evidence increases removal likelihood: transactional proof, booking IDs, timestamps, duplicate content flags, and third‑party confirmations tend to carry the most weight in Google's evaluation.

Transactional proof demonstrates that the reviewer made no actual purchase or visit. Booking IDs and invoice numbers show whether the reviewer's identity matches any recorded sale. Duplicate content detection identifies templated or copied reviews across multiple listings. Third‑party confirmations include screenshots from the reviewer's social profiles showing no connection to the purchase, or evidence that the reviewer publicly trades in paid reviews. Google's moderators favour verifiable, concise evidence over conjecture. Evidence that ties the review to a specific, provable event increases the chance of removal and yields measurable reductions in false sentiment.
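Duplicate content detection can be illustrated with word shingles and Jaccard similarity, a standard near‑duplicate technique. This is an assumed stand‑in: Google does not document how it compares review text. The 0.6 threshold, the sample reviews, and the helper names are hypothetical.

```python
import re
from itertools import combinations


def shingles(text, k=3):
    """Set of lower-cased word k-grams; texts shorter than k become one shingle."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        return {tuple(words)}
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Overlap between two shingle sets, from 0.0 (disjoint) to 1.0 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def near_duplicates(reviews, threshold=0.6):
    """Return (id, id, similarity) for review pairs at or above the threshold."""
    sets = {rid: shingles(text) for rid, text in reviews.items()}
    pairs = []
    for x, y in combinations(sorted(sets), 2):
        score = jaccard(sets[x], sets[y])
        if score >= threshold:
            pairs.append((x, y, round(score, 2)))
    return pairs


# Hypothetical review texts for illustration.
reviews = {
    "r1": "Terrible service, rude staff and the food was cold. Avoid this place!",
    "r2": "Terrible service, rude staff and the food was cold. Avoid this place.",
    "r3": "Lovely lunch, the staff were friendly and the soup was hot.",
}
print(near_duplicates(reviews))
```

Punctuation is ignored, so "r1" and "r2" score as identical templated text, while the genuinely different "r3" shares no three‑word sequence with either.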

What happens inside Google after you file a report?

Google routes reports through automated filters and a triage queue; the process includes automated scoring, potential manual review, and a final decision that removes, suppresses, or retains the review.

Initial handling uses automated classifiers that analyse metadata and content. The classifier assesses probability of inauthenticity using signal weights. If the score passes a threshold, the system either auto‑removes or places the review into a manual moderation queue. Manual reviewers then validate the evidence submitted and check platform policy alignment (for example, policy on spam, conflicts of interest, or prohibited content). The decision outcomes include full removal, temporary suppression, or no action. The effect on search visibility is immediate in cases of removal: the average star rating and sentiment profile adjust, which alters review‑based ranking signals and the entity’s SERP evaluation.
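The score‑and‑threshold routing described above can be sketched as a logistic combination of binary signals. Every weight, bias and threshold here is a made‑up illustration, and the signal names are hypothetical. Google does not publish its classifier, signals or cut‑offs.

```python
import math

# Hypothetical signal weights, bias and thresholds, chosen only for illustration.
WEIGHTS = {"new_account": 1.2, "burst": 1.5, "duplicate_text": 2.0, "no_transaction": 1.8}
BIAS = -3.0
AUTO_REMOVE = 0.9
MANUAL_REVIEW = 0.5


def inauthenticity_score(signals):
    """Combine present binary signals into a 0-1 probability-like score."""
    z = BIAS + sum(WEIGHTS[name] for name, present in signals.items() if present)
    return 1 / (1 + math.exp(-z))


def route(signals):
    """Auto-remove above the high threshold, queue for a human in the middle band."""
    score = inauthenticity_score(signals)
    if score >= AUTO_REMOVE:
        return "auto_remove", score
    if score >= MANUAL_REVIEW:
        return "manual_queue", score
    return "no_action", score


print(route({"new_account": True, "burst": True, "duplicate_text": True, "no_transaction": True}))
print(route({"duplicate_text": True, "no_transaction": True}))
print(route({"new_account": True}))
```

The two thresholds capture the design described in the text: only high‑confidence cases skip human review, and a middle band goes to manual moderators who check the submitted evidence.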

How do search algorithms interpret removed or retained reviews?

Search algorithms treat removed reviews as non‑signals and adjust reputation measures; retained reviews continue to contribute to sentiment metrics, placement influence, and entity‑credibility scores.

When a review is removed, it ceases to contribute to aggregate ratings and sentiment distribution. The algorithm then recalculates local ranking influence and trust proxies using the remaining genuine reviews. If removal changes the star average materially, the business’s visibility in local packs and map results can shift. Retained reviews continue to be indexed, influence click‑through behaviour, and shape perceived credibility. The mechanism is iterative: search engines re‑index the business profile and update ranking factors in the next evaluation cycle. Monitoring these changes provides measurable insight into the effectiveness of the reporting action.
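Monitoring can be as simple as comparing snapshots taken before and after an action. The sketch below makes several assumptions: the rating is a simple mean, and "placement rate" is the share of tracked searches in which the profile appeared in the local pack. The `Snapshot` class, the 0.1‑star materiality threshold, and all figures are hypothetical and are not measured results.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    ratings: list[int]     # star ratings visible on the profile at snapshot time
    local_pack_hits: int   # tracked searches where the profile appeared in the local pack
    tracked_searches: int  # total tracked searches in the period

    @property
    def average(self) -> float:
        return sum(self.ratings) / len(self.ratings) if self.ratings else 0.0

    @property
    def placement_rate(self) -> float:
        return self.local_pack_hits / self.tracked_searches if self.tracked_searches else 0.0


def compare(before: Snapshot, after: Snapshot, material_change: float = 0.1) -> dict:
    """Summarise rating and placement movement between two snapshots."""
    delta = after.average - before.average
    return {
        "average_before": round(before.average, 2),
        "average_after": round(after.average, 2),
        "material": abs(delta) >= material_change,
        "placement_change": round(after.placement_rate - before.placement_rate, 2),
    }


# Illustrative figures: three fake one-star reviews removed between snapshots.
before = Snapshot([5, 4, 4, 3, 5, 2, 4, 1, 1, 1], local_pack_hits=12, tracked_searches=40)
after = Snapshot([5, 4, 4, 3, 5, 2, 4], local_pack_hits=18, tracked_searches=40)
print(compare(before, after))
```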

How effective is reporting compared with alternative strategies?

Reporting is effective for clear policy or legal breaches; content‑enhancement and response strategies are more effective where evidence for removal is insufficient.

Reporting is the appropriate route when a review violates platform policy or the law; with sufficient evidence, it directly suppresses the offending content. However, reporting will not succeed against reviews that are negative but authentic. In those cases, alternative methods work by improving reputation signals: soliciting verified reviews, publishing authoritative content, and engaging constructively with reviewers to shift the sentiment distribution. Removal provides fast, discrete corrections for specific false items, while enhancement shifts the overall SERP evaluation over time. The optimal strategy integrates both: remove provably fake reviews and amplify genuine positive signals to stabilise perception.

How should businesses collect and store evidence for future reports?

Businesses must implement evidence‑capture processes that log transaction IDs, timestamps, customer contact records, and communication threads to support future reporting and legal escalation.

Create a structured evidence archive that links each sale or visit to verifiable identifiers. Store digital receipts, booking confirmations, delivery proofs, and contact logs. When a review dispute arises, extract the relevant records and prepare a concise evidence package. It should include the alleged reviewer's name or pseudonym, transaction references, and any relevant correspondence. Speed and clarity matter: Google's moderators respond best to short, well‑organised packages that directly contradict the review's claims. Retaining evidence also supports legal escalation if necessary.
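An evidence archive can be prototyped as an index of transactions keyed by customer name. The sketch below is a hypothetical in‑memory version; a production archive would sit in a database. The `Transaction` fields, the 90‑day lookback, and the case‑insensitive name matching are all assumptions. Reviewers often post under pseudonyms, so a missing match is supporting evidence, not proof.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Transaction:
    order_id: str
    customer_name: str
    on: date
    receipt_file: str


class EvidenceArchive:
    """Hypothetical in-memory stand-in for a structured evidence archive."""

    def __init__(self):
        self._by_name = {}

    def add(self, tx):
        # Index by case-folded name so "Tom Brown" and "tom brown" match.
        self._by_name.setdefault(tx.customer_name.casefold(), []).append(tx)

    def matches(self, reviewer_name, review_date, lookback_days=90):
        """Transactions under this name within lookback_days before the review."""
        earliest = review_date - timedelta(days=lookback_days)
        return [tx for tx in self._by_name.get(reviewer_name.casefold(), [])
                if earliest <= tx.on <= review_date]

    def package(self, reviewer_name, review_date, lookback_days=90):
        """One-line finding to include in an evidence package."""
        found = self.matches(reviewer_name, review_date, lookback_days)
        if found:
            refs = ", ".join(f"{tx.order_id} ({tx.on.isoformat()}, {tx.receipt_file})"
                             for tx in found)
            return f"Reviewer '{reviewer_name}' matches recorded transactions: {refs}"
        return (f"No transaction recorded for '{reviewer_name}' in the "
                f"{lookback_days} days before {review_date.isoformat()}")


archive = EvidenceArchive()
archive.add(Transaction("ORD-1001", "Priya Patel", date(2024, 4, 12), "receipts/ORD-1001.pdf"))
archive.add(Transaction("ORD-1002", "Tom Brown", date(2024, 1, 3), "receipts/ORD-1002.pdf"))
print(archive.package("Tom Brown", date(2024, 5, 2)))
print(archive.package("priya patel", date(2024, 5, 2)))
```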

What are the limitations and legal constraints around reporting?

Reporting is constrained by platform policy scope and legal jurisdiction; removal is not guaranteed when content is opinion, fair comment, or lawful speech.

Google's remit is platform compliance, not judicial adjudication of whether a review is true. Its policies make exceptions for free expression and newsworthy content. Negative but factual material usually remains unless it breaches privacy or copyright rules, or a court order establishes that it is defamatory. Legal escalation is available but requires proof of jurisdiction and formal procedures. In practice, reporting cannot remove legitimate criticism, and repeated unfounded reports can weaken the credibility of later submissions.

What practical steps improve the overall reputation after reporting?

Combine targeted removal with reputation enhancement: request verified reviews, publish authoritative content, and optimise profiles to change the SERP composition and strengthen entity credibility.

Request verified reviews from recent customers and ensure these entries are linked to purchase evidence. Publish authoritative pages that address product quality, policies, and third‑party verification, giving search engines new content to index. Optimise business profiles and knowledge panels so that the SERP surfaces high‑quality, authoritative material. The mechanism is suppression plus enhancement: removal reduces the weight of harmful reviews and images, while new content strengthens positive reputation signals and ranking influence. The combined effect stabilises perception and reduces future risk.

Reporting fake Google reviews is a procedural, evidence‑driven activity that operates within the constraints of platform policy and legal frameworks. Reputation management strategies differ based on whether they aim to remove specific content or to enhance overall reputation signals. Effective outcomes require precise evidence capture, prompt reporting, and concurrent content‑enhancement to stabilise the entity’s SERP evaluation and perceived credibility.