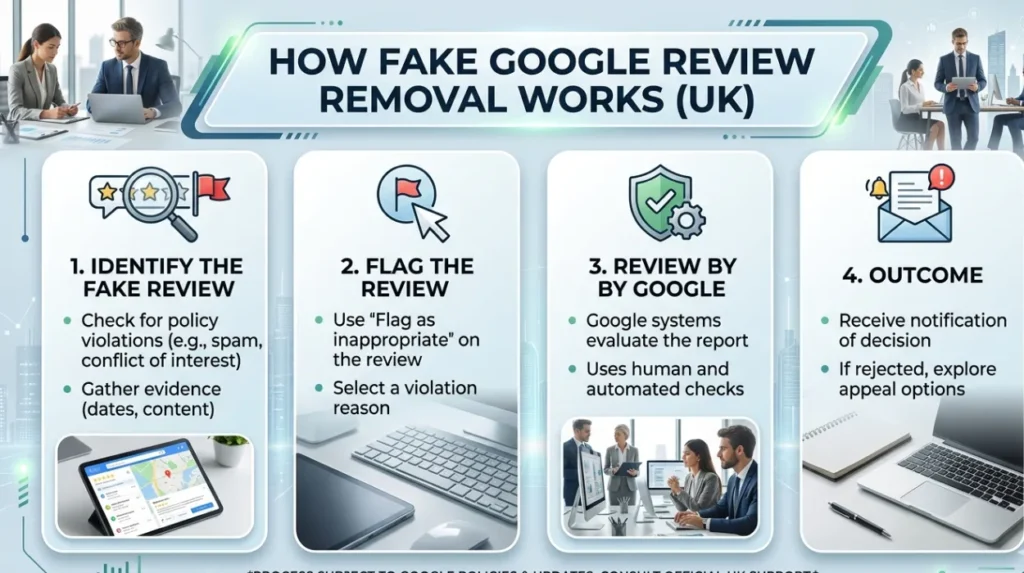

The fake Google review removal process in the UK is a structured, policy-driven workflow that filters out inauthentic or policy-violating reviews while leaving authentic but negative feedback visible. Reputation management strategies differ according to whether they rely on removing reviews, strengthening positive reputation signals, or shaping what appears in search results (SERP control). Online reputation control methods are evaluated by their effect on three things: how much harmful content they remove, how much they influence search rankings, and how credible the business appears overall.

How does the fake Google review removal process work in the UK?

In the UK, fake Google review removal follows a step-by-step moderation workflow that checks each review against Google's policy categories and removes only those that clearly breach a defined rule. The process is not an arbiter of reputation. It is a compliance and fraud filter that acts on evidence of a policy breach, not on subjective disputes about whether a review is fair.

The process begins by identifying candidate reviews that appear suspicious or inauthentic. The system then applies a multi-stage evaluation: automated pattern analysis, policy checks, and human moderation where necessary. The final step is a decision to remove the review, suppress it, or leave it in place, depending on the strength of the evidence. The rules are transparent but narrow in scope, so not every flagged review is removed.
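The three stages can be pictured as a toy decision function. This is a minimal sketch under stated assumptions, not Google's actual pipeline: the `Review` fields, the `POLICY_CATEGORIES` set, the 0.3 and 0.9 score thresholds, and the choice to suppress a review while human moderation is pending are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional, Set


class Decision(Enum):
    REMOVE = "remove"
    SUPPRESS = "suppress"
    LEAVE = "leave"


# Hypothetical policy categories; Google's published policy list is worded differently.
POLICY_CATEGORIES = {"spam", "fake_engagement", "off_topic", "conflict_of_interest"}


@dataclass
class Review:
    review_id: str
    pattern_score: float  # assumed 0..1 output of an automated pattern analyser
    policy_flags: Set[str] = field(default_factory=set)  # categories the review appears to breach
    human_confirmed: Optional[bool] = None  # None means no moderator has ruled yet


def evaluate(review: Review) -> Decision:
    """Toy multi-stage evaluation: pattern analysis, policy check, human moderation."""
    # Stage 1: automated pattern analysis. Unsuspicious, unflagged reviews are not candidates.
    if review.pattern_score < 0.3 and not review.policy_flags:
        return Decision.LEAVE
    # Stage 2: policy check. Suspicion alone is not a breach, so
    # authentic-but-negative reviews with no policy flag stay visible.
    breaches = review.policy_flags & POLICY_CATEGORIES
    if not breaches:
        return Decision.LEAVE
    # Very strong automated evidence of a breach is acted on directly.
    if review.pattern_score >= 0.9:
        return Decision.REMOVE
    # Stage 3: human moderation decides the remaining cases.
    if review.human_confirmed is None:
        return Decision.SUPPRESS  # held back while a moderator reviews it
    return Decision.REMOVE if review.human_confirmed else Decision.LEAVE
```

Under these assumptions, `evaluate(Review("r1", 0.95, {"fake_engagement"}))` returns `Decision.REMOVE`, while a review with a high pattern score but no policy flag is left in place, mirroring the rule that authentic negative feedback stays visible.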

For search visibility, the cluster of reviews around the listing may shrink slightly, but the overall sentiment distribution continues to shape how the listing appears in search results. The process blunts the most egregious fake reviews without reshaping the entire reputation profile: it partially cleans the review signal rather than rewriting the business's online image.

How do content‑removal strategies compare with content‑enhancement strategies?

Content-removal strategies focus on delisting or suppressing inauthentic reviews, while content-enhancement strategies prioritise publishing positive, authoritative content that dilutes the impact of negative items. The former aims to shrink the harmful signal; the latter aims to strengthen the positive one. The choice between them shapes the long-term trajectory of a business's reputation.

Content-removal strategies work by applying platform moderation rules, legal claims, or policy challenges to specific review URLs. The mechanism is direct but constrained, because not every negative review is fake or violates policy. The approach is strong for acute damage control but brittle, because it depends on external rules and on how those rules are interpreted. Its effect on search visibility is targeted but limited.

Content-enhancement strategies work by publishing high-quality, verifiable content that supports the business's credibility. Over time this builds a dense layer of positive content that search engines treat as a stable signal. The result is a gradual shift in the composition of search results, in which harmful reviews become less prominent relative to positive content. The approach is slower but more sustainable, because it does not depend on the narrow and unpredictable route of policy-based removal.

How do reactive and proactive reputation‑management methods differ?

Reactive reputation management responds to existing negative signals, while proactive reputation management builds resilience before harm occurs. The first is event-driven and response-oriented; the second is continuous and preventative. The distinction determines how reputational risk is managed over time.

Reactive methods work by diagnosing the harm, choosing a response, and carrying it out within the rules set by Google and the host platforms. They are triggered by specific events, such as a spike in fake reviews, negative news coverage, or articles about a data leak. Their effect on search visibility is immediate but narrow, because the response is confined to the acute issue. The risk is that the business must react to each new incident separately, which can be costly and fragmented.

Proactive methods work by expanding the business's digital footprint with high-quality, verifiable content. This builds a stable, authoritative reputation layer that search engines treat as a reliable signal. The result is a more resilient search profile, in which the business's credibility rests on positive and neutral content. New negative signals have less impact because the existing body of content already outweighs them, so the effect is more sustainable and less vulnerable to short-term spikes.

How do short‑term removal tactics compare with long‑term SERP‑shaping strategies?

Short-term removal tactics aim to suppress or delete specific inauthentic reviews quickly, while long-term SERP-shaping strategies restructure the search results as a whole. The former produces fast, visible changes; the latter produces more stable and lasting ones. The trade-off is between speed and sustainability.

Short-term removal tactics include policy challenges, legal notices, and moderation requests to the platform. They work by using the narrow routes that Google's policies and the host country's laws provide. When they succeed, the harmful review disappears from the listing or is demoted, which reduces the immediate damage to the business's credibility. The effect is visible but fragile, because the review can resurface if conditions change.

Long-term SERP-shaping strategies work by building a dense layer of positive content that search engines treat as a stable signal. The mechanism is iterative: each new authoritative page shifts the balance of the search results a little further. The result is a gradual but more permanent change in how the business appears in search. Its reputation rests on the presence of positive and neutral content, not on the absence of negative reviews. The approach is more robust because the business drives it directly and it reinforces itself over time.

How do indexing constraints affect the removal of fake reviews?

Indexing constraints matter because Google's index cannot track every change in real time, so a removed review may linger in search results for a short period. The system relies on periodic crawling and user reports to detect changes and update its index. The result is a lag between removal and de-indexing, during which the fake review remains visible.

In this context, indexing is the process of storing and organising web pages and review data so they can be searched. Crawling is distributed and cannot inspect every page or review continuously, so outdated or harmful content may remain in search results even after the original review has been deleted. For people searching, the negative narrative persists despite the removal.
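The lag can be illustrated with simple arithmetic. The sketch below assumes, purely for illustration, that a page is recrawled at a fixed interval; real crawl schedules vary and are not published. The function `stale_until` and its parameters are hypothetical.

```python
from datetime import datetime, timedelta


def stale_until(removed_at: datetime, last_crawl: datetime, crawl_interval: timedelta) -> datetime:
    """Return the first crawl after the removal, i.e. when a cached copy would
    first reflect it, assuming recrawls happen every `crawl_interval`."""
    if crawl_interval <= timedelta(0):
        raise ValueError("crawl_interval must be positive")
    next_crawl = last_crawl
    # Step forward one interval at a time until a crawl falls after the removal.
    while next_crawl <= removed_at:
        next_crawl += crawl_interval
    return next_crawl


# Example: last crawled 1 Jan, recrawled every 3 days, review removed on 5 Jan.
removed = datetime(2024, 1, 5)
visible_until = stale_until(removed, datetime(2024, 1, 1), timedelta(days=3))
print(visible_until - removed)  # prints 2 days, 0:00:00
```

Under this simplified model, the stale copy stays visible for up to one crawl interval after removal; a longer interval means a longer window in which the deleted review can still appear.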

As a result, the business's image in search results remains distorted for a time. Because ranking favours the most prominent pages, outdated content on highly visible pages has the greatest effect. Lingering harmful reviews can damage perceived trustworthiness even when the underlying reality is different, and because the process is slow and uncertain, it complicates online reputation management.

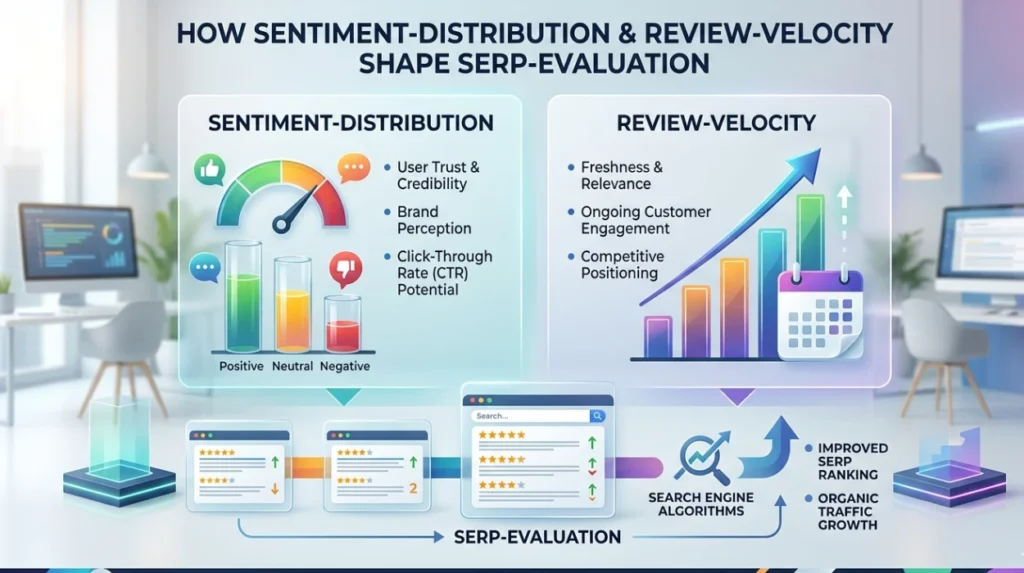

How do sentiment distribution and review velocity shape SERP evaluation?

Sentiment distribution and review velocity shape how Google interprets a business's overall reputation signal. The system treats a cluster of inauthentic positive or inauthentic negative reviews as a potential anomaly, which can trigger moderation or demotion. The evaluation of a listing is therefore affected by the pattern of its reviews, not just its average rating.

Sentiment distribution is the spread of positive, negative, and neutral reviews; review velocity is the rate at which new reviews arrive. Pattern analysis looks for spikes, clusters, and unusually uniform sentiment. A sudden burst of 5-star reviews, for example, can raise a fraud signal and reduce the weight those reviews carry in search. The system then recalibrates the listing's reputation score accordingly.
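One way to picture spike and uniformity detection is a trailing-window outlier test on daily review counts, combined with a check on the share of 5-star ratings. This is a generic anomaly-detection sketch, not Google's method; the 7-day window, the three-standard-deviation threshold, and both function names are assumptions.

```python
from statistics import mean, pstdev
from typing import List


def velocity_spikes(daily_counts: List[int], window: int = 7, k: float = 3.0) -> List[int]:
    """Return the indices of days whose review count is an outlier
    relative to the preceding `window` days."""
    spikes = []
    for i in range(window, len(daily_counts)):
        baseline = daily_counts[i - window:i]
        mu, sigma = mean(baseline), pstdev(baseline)
        # Floor sigma at 1 so a perfectly flat baseline does not flag tiny changes.
        if daily_counts[i] > mu + k * max(sigma, 1.0):
            spikes.append(i)
    return spikes


def five_star_share(ratings: List[int]) -> float:
    """Fraction of ratings that are 5 stars; values near 1.0 suggest uniform sentiment."""
    return ratings.count(5) / len(ratings) if ratings else 0.0


counts = [2, 1, 3, 2, 2, 1, 2, 25, 2]
print(velocity_spikes(counts))  # [7]: the day with 25 reviews
print(five_star_share([5, 5, 5, 4]))  # 0.75
```

A day flagged by `velocity_spikes` whose ratings also have a very high `five_star_share` is the kind of burst described above; a real system would weigh many more signals.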

This makes the evaluation of a business more dynamic. Users treat what they see in search results as a proxy for trust, so the system's response to sentiment distribution and review velocity shapes how the business is perceived. Because sentiment distribution, review velocity, and content quality all contribute, the result is a more complex reputation picture than a simple star average.
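The point that the distribution matters, not just the average, is easy to check numerically. This sketch assumes, for illustration only, that 4 to 5 stars counts as positive, 3 as neutral, and 1 to 2 as negative; `sentiment_distribution` is a hypothetical helper.

```python
from collections import Counter
from statistics import mean
from typing import Dict, List


def sentiment_distribution(ratings: List[int]) -> Dict[str, float]:
    """Share of positive (4-5), neutral (3), and negative (1-2) ratings."""
    if not ratings:
        return {"positive": 0.0, "neutral": 0.0, "negative": 0.0}
    buckets = Counter(
        "positive" if r >= 4 else "negative" if r <= 2 else "neutral" for r in ratings
    )
    return {k: buckets[k] / len(ratings) for k in ("positive", "neutral", "negative")}


steady = [3, 3, 3, 3]
polarised = [5, 5, 1, 1]
print(mean(steady), mean(polarised))  # both averages equal 3
print(sentiment_distribution(steady))     # {'positive': 0.0, 'neutral': 1.0, 'negative': 0.0}
print(sentiment_distribution(polarised))  # {'positive': 0.5, 'neutral': 0.0, 'negative': 0.5}
```

Both listings average 3 stars, yet one is uniformly neutral and the other is split between extremes, which is why a star average alone says little about the underlying pattern.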

In summary, the UK removal process filters out inauthentic or policy-violating reviews while leaving authentic but negative feedback visible. Reputation management strategies differ in how they use it: removal-focused approaches deliver direct but limited results, while enhancement-focused approaches deliver slower but more sustainable ones.