Fake Google reviews damage trust because they corrupt the reputation signals that search engines, users, and networks rely on to infer credibility, even when people are aware that fabrication may occur. Reputation management is the systematic control and optimisation of how entities appear in search‑driven information systems; online reputation refers to how search visibility, review signals, and content‑ranking patterns shape user perception of a business’s trustworthiness.

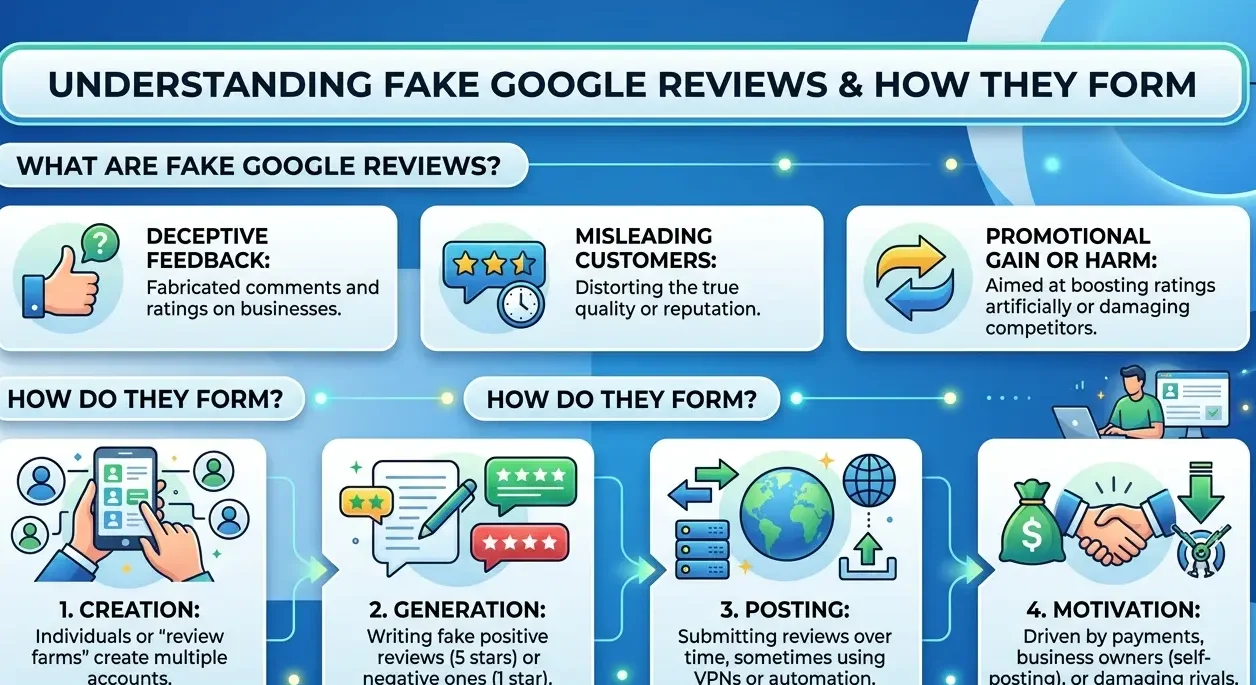

What are fake Google reviews and how do they form?

Fake Google reviews are inauthentic, incentivised, or manufactured user‑style ratings that search engines and users treat as genuine reputation signals despite their non‑organic origin.

Fake Google reviews are star ratings and text feedback that are generated, coordinated, or paid for rather than arising from spontaneous user experience. Within search ecosystems, fake reviews tend to share patterns such as identical phrasing, posting clustered in time, or explicit incentive language, which set them apart from naturally distributed, user-driven feedback.

The formation mechanism operates across three layers. First, actors create or reuse Google accounts with weak or inconsistent history and limited interaction‑behaviour. Second, they deploy bulk or scripted text that mimics the style of real reviews but lacks the idiosyncratic detail seen in genuine feedback. Third, they distribute these reviews across one or multiple listings to create a signal‑cluster that search systems interpret as strong engagement.

The impact on SERP evaluation is measurable. When a listing accumulates clusters of high-volume reviews with similar text within short windows, Google’s systems can detect the velocity and pattern anomalies. Google may then down-weight those clusters or mark them as low-trust, but by that point the artificial signal has already shaped users’ first impression of the entity. In the meantime, the listing appears more credible (or, where the fake reviews are negative, less credible) than the underlying experience would justify.

How does search interpret trust and credibility from review signals?

Search interprets trust and credibility from review signals by combining star‑ratings, text sentiment, review‑history curves, and author‑reputation into a composite perception model of the business.

Review‑based trust refers to how search engines and users infer reliability from the volume, distribution, and consistency of user‑generated feedback. Within Google’s evaluation framework, reviews are treated as structured signals that include quantitative components (stars) and qualitative components (text, length, sentiment). The system also logs timestamps, posting frequency, and author‑behaviour patterns to assess authenticity.

The mechanism works in layers. The star‑layer defines the numeric average and variance; a 4.7‑star average with low variance signals stability, while frequent spikes or drops trigger anomaly‑detection. The text‑layer supplies semantic signals: detailed, event‑specific descriptions are treated as higher‑quality than generic, superlative‑driven content. The author‑layer evaluates profile‑history, review‑count, and cross‑listing behaviour to distinguish between credible users and coordinated accounts.
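To make the star layer concrete, the following minimal Python sketch computes the average and variance for two listings. The ratings and the `star_summary` helper are hypothetical and purely illustrative; they do not describe how Google actually computes or weights these values.

```python
from statistics import mean, pvariance

def star_summary(ratings):
    """Return (average, population variance) for a list of 1-5 star ratings."""
    return round(mean(ratings), 2), round(pvariance(ratings), 2)

# Hypothetical ratings, not real data. Both listings have the same average.
steady = [5, 5, 4, 5, 5, 4, 5, 5, 4, 5]   # mostly 4s and 5s
spiky = [5, 5, 5, 5, 5, 5, 5, 5, 5, 2]    # all 5s plus one sharp drop

print(star_summary(steady))  # (4.7, 0.21)
print(star_summary(spiky))   # (4.7, 0.81)
```

Both listings show 4.7 stars, but the second has roughly four times the variance, which is the kind of difference the average alone hides.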

The impact on entity perception is significant. High-volume, consistently positive review clusters push the SERP representation of the business into a higher-trust band, even if some of the reviews are fake. The system weighs the cluster’s density and volume heavily, which can make the listing appear more reliable than a competitor of similar service quality with a smaller, entirely authentic review base. The perception shift is built into the SERP before the user consciously evaluates the reviews.

Why do fake reviews harm trust even when users expect them?

Fake reviews harm trust because they create a persistent credibility gap between the visible reputation profile and the actual user experience, even when people are aware that manipulation may occur.

The credibility gap is the distance between the reputation signals that appear in the SERP and the actual quality of the service or product. When users know that fake reviews exist, their baseline expectation shifts: they no longer treat 4.8 stars as proof of quality but as an indicator that may be distorted. The system still weights the visible signal, however, and the user’s awareness cannot override the ranking logic that underpins search visibility.

The damage is twofold. First, the listing’s reputation is over‑ or under‑represented, which skews user‑decision‑making and erodes the predictive power of reviews as a trust‑tool. Second, the meta‑awareness that “reviews can be faked” weakens the entire review‑ecosystem as a trust‑source, not just the specific listing. The damage spreads beyond the targeted business to the wider search environment.

Search‑ecosystem‑level effects intensify the problem. When a cluster of fake reviews lifts a listing’s position in local search, surrounding competitors may appear relatively weaker even if their service quality is similar. The artificial signal cascades into discovery patterns, driving more traffic to the inflated‑reputation entity. The system’s attempt to model fairness encounters behaviours that deliberately distort the feedback‑layer.

How do fake reviews affect SERP evaluation and visibility?

Fake reviews affect SERP evaluation and visibility by artificially inflating or deflating review‑based ranking signals, which alters the way search engines prioritise and label local business profiles.

SERP evaluation depends on the weighted input of reputation signals, including review count, average rating, and sentiment distribution. Fake reviews insert inauthentic data into this layer, which can cause the system to raise or lower the visible score without any corresponding change in service quality. A 4.3-star business whose average is pushed to 4.7 by a cluster of 100 fake reviews appears more credible than the actual customer experience justifies.
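The arithmetic behind that example depends on how many reviews the listing already has. The short Python sketch below works it through, assuming all 100 added reviews are five-star; the existing review counts and the `inflated_average` helper are hypothetical.

```python
def inflated_average(current_avg, current_count, added_count, added_rating=5.0):
    """Average rating after `added_count` reviews of `added_rating` stars are added."""
    total_stars = current_avg * current_count + added_rating * added_count
    return total_stars / (current_count + added_count)

# The 4.3 average and the 100-review cluster come from the text above;
# the existing review counts are hypothetical.
for existing in (75, 200, 500):
    new_avg = inflated_average(4.3, existing, 100)
    print(f"{existing} existing reviews: 4.3 -> {new_avg:.2f}")
```

Under these assumptions, the jump from 4.3 to 4.7 happens only when the listing has about 75 genuine reviews. With 500 genuine reviews, the same cluster moves the average to roughly 4.42, so smaller listings are far more exposed to this kind of inflation.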

The mechanism operates through pattern‑detection and recalibration. When Google’s systems detect clusters of similar‑text, high‑velocity, or low‑author‑history reviews, they may reduce the weight of those clusters or flag them for moderation. The listing’s star‑average may partially revert, but the transient inflation alters search‑visibility during the period it is active. The SERP‑position and click‑probability shift before the system corrects the signal.

The visibility impact can be long-lasting. Even after a fake cluster is down-weighted or removed, the inflated impression can linger in users’ memory and in secondary content that references the listing. The reputational distortion becomes embedded in the wider information network, which search systems continue to interpret as part of the entity’s credibility profile. The distortion decays more slowly than it was built.

How does search distinguish fake from authentic review patterns?

Search distinguishes fake from authentic review patterns by applying statistical pattern analysis, behavioural authentication, and text-similarity metrics to detect anomalous reputation signals.

Authentic reviews refer to user‑driven, non‑coerced feedback that is distributed over time, varies in detail, and aligns with verified‑interaction‑patterns. Within Google’s evaluation framework, authenticity is inferred from variability in phrasing, realistic event‑descriptions, and staggered posting‑intervals. The system expects a degree of lexical and temporal diversity across the review cluster.

The detection mechanism operates on several dimensions. First, the system analyses text‑similarity: clusters of reviews that share identical adjectives, sentence‑structure, or exclamation‑patterns trigger low‑diversity warnings. Second, it tracks review‑velocity; sudden spikes of 50–100 reviews in 48 hours are treated as suspicious, especially when posted by accounts with limited history. Third, it checks author‑behaviour; accounts that post hundreds of reviews across unrelated categories or mirror each other’s phrasing raise red‑flag signals.
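The first two dimensions can be illustrated with a small Python sketch. It is not Google’s method: it swaps in simple word-set (Jaccard) overlap for text similarity and a sliding 48-hour window for velocity. The function names, the 0.8 similarity threshold, and the sample data are all assumptions made for illustration.

```python
from datetime import datetime, timedelta
from itertools import combinations

def jaccard(a, b):
    """Word-set overlap between two review texts (0 = disjoint, 1 = identical)."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    if not words_a and not words_b:
        return 0.0
    return len(words_a & words_b) / len(words_a | words_b)

def near_duplicate_pairs(texts, threshold=0.8):
    """Return index pairs whose overlap meets the (assumed) threshold."""
    return [(i, j)
            for (i, a), (j, b) in combinations(enumerate(texts), 2)
            if jaccard(a, b) >= threshold]

def max_reviews_in_window(timestamps, window=timedelta(hours=48)):
    """Largest number of reviews that fall inside any single sliding window."""
    ts = sorted(timestamps)
    best = start = 0
    for end in range(len(ts)):
        # Shrink the window from the left until it spans at most `window`.
        while ts[end] - ts[start] > window:
            start += 1
        best = max(best, end - start + 1)
    return best

# Hypothetical sample data.
texts = [
    "Great service, highly recommend!",
    "Great service, highly recommend!",
    "Waited 20 minutes but the engineer fixed the boiler leak",
]
base = datetime(2024, 1, 1)
organic = [base - timedelta(weeks=w) for w in range(1, 6)]     # one per week
burst = [base + timedelta(minutes=30 * i) for i in range(60)]  # 60 in ~30 hours

print(near_duplicate_pairs(texts))             # [(0, 1)]
print(max_reviews_in_window(organic))          # 1
print(max_reviews_in_window(organic + burst))  # 60
```

A production system would need far more robust text comparison and would combine these signals with author history, but the sketch shows why identical phrasing and a burst of 60 reviews in under two days stand out against a background of roughly one review per week.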

The impact on SERP‑representation is partial but measurable. When clusters are detected as inauthentic, the system may reduce their weight in the star‑average, deprioritise them in feature‑exposure, or tag them for removal. The SERP‑display of the listing shifts toward a more realistic, but not fully‑corrected, reputation profile. The lingering signal‑remnants still influence how users interpret the business.
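One way to picture a partial correction is a weighted average in which suspected reviews count for less than genuine ones. The Python sketch below is only an illustration under that assumption; the weights, the review counts, and the `weighted_star_average` helper are hypothetical, and the source does not say how Google actually adjusts the displayed rating.

```python
def weighted_star_average(reviews):
    """Average of (stars, weight) pairs; a weight of 0 effectively removes a review."""
    reviews = list(reviews)
    total_weight = sum(weight for _, weight in reviews)
    if total_weight == 0:
        return None
    return sum(stars * weight for stars, weight in reviews) / total_weight

# Hypothetical listing: 40 genuine reviews averaging 4.3 stars.
genuine = [(5, 1.0)] * 20 + [(4, 1.0)] * 12 + [(3, 1.0)] * 8

def with_suspected(weight, count=60):
    """Add `count` suspected five-star reviews at the given weight."""
    return genuine + [(5, weight)] * count

for weight in (1.0, 0.25, 0.0):
    avg = weighted_star_average(with_suspected(weight))
    print(f"suspected-review weight {weight}: average {avg:.2f}")
```

With these numbers the average is about 4.72 at full weight, 4.49 when the suspected cluster is down-weighted to 0.25, and 4.30 when it is removed entirely, which mirrors the "more realistic, but not fully corrected" profile described above.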

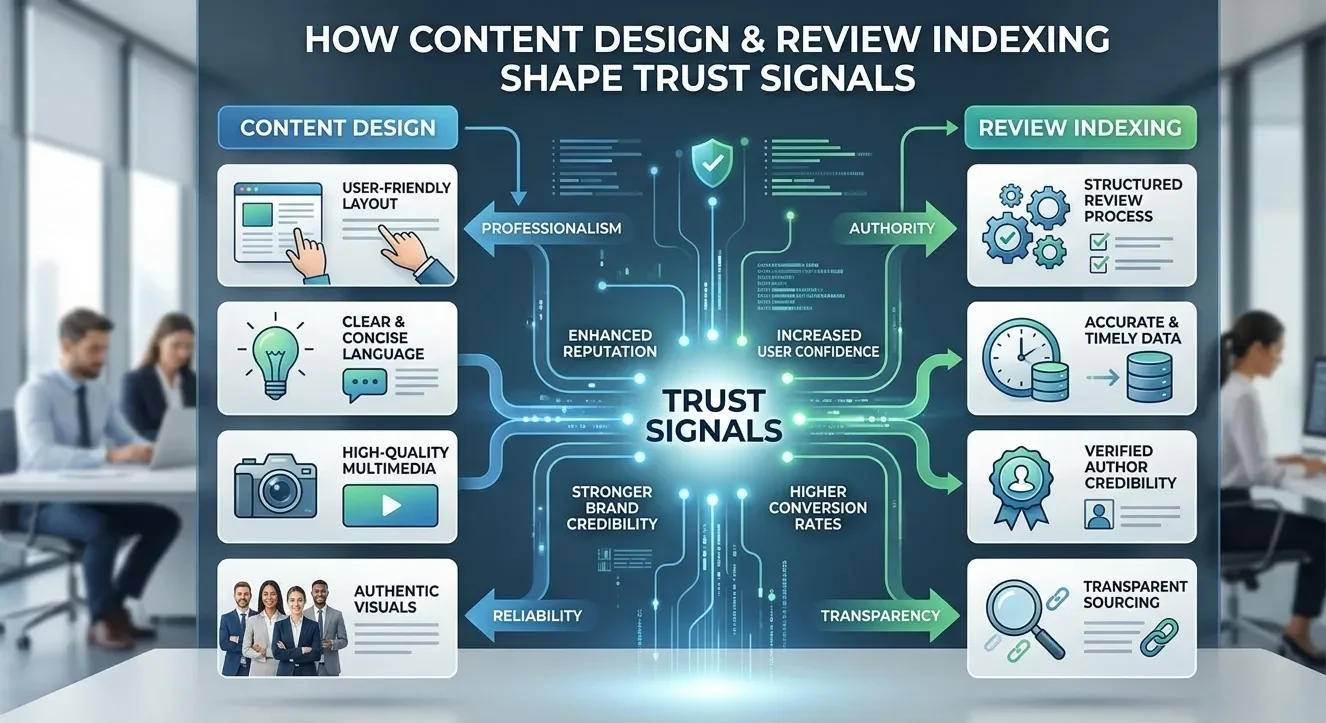

How do content design and review indexing shape trust signals?

Content design and review indexing shape trust signals by determining how much and what kind of reputation information search engines can access, interpret, and amplify in the SERP.

Review indexing refers to the technical conditions under which review text and metadata become visible and searchable within Google’s systems. Reviews that are structured with clear schema markup, accessible via standard HTML, and not blocked by `noindex` directives are more likely to be fully integrated into the listing’s reputation model. Conversely, reviews embedded in non-indexable widgets or hidden behind interactive layers lose much of their influence on SERP evaluation.

Content design amplifies the trust‑signal further. Businesses that publish detailed, accurate service‑descriptions, consistent NAP (name, address, phone) data, and high‑quality visual content create a broader, more verifiable digital footprint. The system correlates this footprint with the review layer, reinforcing the perception of stability and transparency. The more coherent the overall content‑profile, the more reliable the review‑signal‑cluster appears, even when some reviews are inauthentic.

The combined effect on entity perception is substantial. A well‑structured listing with 500 indexed reviews, consistent metadata, and clear on‑site information occupies a higher‑trust band in the SERP than a sparsely‑reviewed, poorly‑structured profile. Fake reviews inserted into a strong, coherent footprint gain disproportionate weight because they sit on top of a solid reputation‑base. The design‑layer reinforces the illusion of credibility.

How does the review‑information environment affect broader digital trust?

The review‑information environment affects broader digital trust because it conditions user expectations, shapes search‑engine‑evaluation rules, and determines how heavily fake signals can distort perception.

The long‑term consequence is a more fragmented, but also more robust, trust‑ecosystem. Users rely on multiple signals to infer credibility rather than a single review‑score. The SERP integrates more external‑references, expert‑reviews, and institutional‑mentions into the entity‑perception layer. Fake reviews still distort, but the system and its users apply more sophisticated filters to mitigate the harm.

Fake Google reviews do not only mislead individual users; they corrode the reputation‑signals that search engines, networks, and consumers use to infer trust and quality. The visible distortion of ratings and sentiment changes how SERPs evaluate entities, how users allocate attention, and how the wider review‑ecosystem evolves. Even when people know that fabrication is possible, the persistent gap between perceived and real reputation undermines the reliability of the information environment. The long‑term task is not just to remove or suppress fake reviews, but to build a more resilient, multi‑source reputation‑framework that can withstand deliberate manipulation.